The Leading AI-Inspired Perception Company

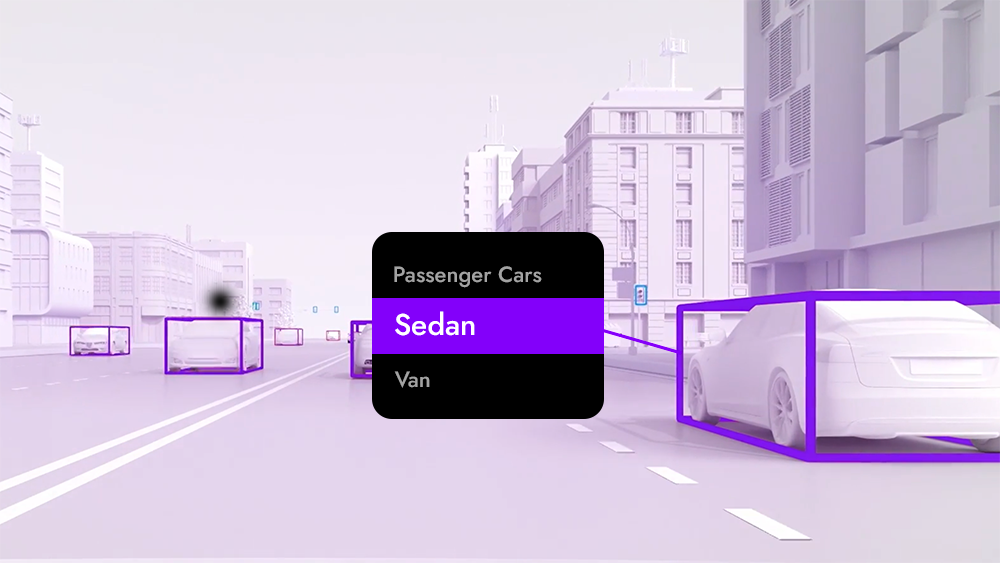

STRADVISION is an industry leader in object recognition technology, accelerating the adoption of fully self-driving vehicles. With more than 300 employees worldwide, we are advancing every day with our expertise in deep learning, embedded platforms, and advanced algorithms.

SVNet, Cutting-Edge AI Technology

SVNet is our core building block: complete deep learning perception software that uses data from camera sensors. It delivers a high level of detection accuracy while consuming fewer hardware resources, enabling our customers to achieve high performance and high efficiency on their choice of SoC.